GSA SER Verified Lists

Understanding GSA SER Verified Lists and Their Impact on Link Building

Anyone who has spent time mastering GSA Search Engine Ranker knows that the software is only as powerful as the data it is fed. At the core of every efficient campaign sits a meticulously curated resource: GSA SER verified lists. These are not just random collections of URLs. They represent pre-tested, confirmed platforms where the software can successfully post content and create accounts without wasting system resources on dead ends.

What Exactly Defines a Verified List?

A verified list is the end product of a rigorous filtering process. When GSA SER scrapes the web for targets, it pulls in millions of potential URLs. The raw scraped data is full of noise: blogs that have closed, forums with registration disabled, and platforms that block automated submission. A verified list has been run through the engine's own test mode or a third-party checker to confirm that each target still accepts submissions. Each entry in a GSA SER verified list package typically includes the exact sign-up or posting URL, so the engine can go straight to submission instead of exploring the site first.

The Performance Gap Between Verified and Unverified Targets

Using unverified global site lists creates a bottleneck. The software spends CPU cycles and connection time trying to post to dead domains, timing out repeatedly and filling the log with errors, which significantly slows the overall submission rate. Switching to GSA SER verified lists removes most of that wasted verification work, so the limiting factor becomes raw throughput instead. A campaign that achieves 30 verified links per day on a raw list might reach 150 in the same timeframe, simply because the software is no longer wasting time on ghost URLs.
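
To see why dead targets cost so much, here is a rough back-of-the-envelope model in Python. It is a sketch under simplifying assumptions, not a measurement of GSA SER itself: every attempt on a dead target is assumed to burn a full timeout, every attempt on a live target a shorter posting time, and all parameter values (live fraction, success rate, seconds per attempt, thread count) are hypothetical.

```python
def verified_links_per_day(live_fraction: float,
                           success_rate: float,
                           secs_live: float,
                           secs_dead: float,
                           threads: int,
                           hours: float = 24.0) -> float:
    """Estimate verified links per day under a simple time-budget model.

    Hypothetical assumptions: dead targets cost a full timeout of
    `secs_dead` seconds, live targets cost `secs_live` seconds, and only
    live targets can yield a verified link, at `success_rate`.
    """
    # Average wall-clock cost of one attempt, weighted by the list's live/dead mix.
    avg_secs = live_fraction * secs_live + (1 - live_fraction) * secs_dead
    # Number of attempts that fit into the time budget across all threads.
    attempts = threads * hours * 3600 / avg_secs
    # Only the live share of attempts can produce a verified link.
    return attempts * live_fraction * success_rate


# Hypothetical comparison: same success rate on live targets, different list quality.
raw = verified_links_per_day(0.05, 0.5, secs_live=10, secs_dead=30, threads=1)
clean = verified_links_per_day(0.90, 0.5, secs_live=10, secs_dead=30, threads=1)
print(f"raw list: {raw:.0f}/day, verified list: {clean:.0f}/day")
```

The absolute numbers mean nothing outside the assumed parameters. The point of the model is that the live fraction appears twice: it lowers the average cost per attempt and raises the yield per attempt, so a cleaner list improves throughput on both fronts.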

How High-Quality Lists Are Built and Maintained

The lifespan of a verified target is surprisingly short. A platform that works perfectly today might install a security update or be abandoned by its owner tomorrow, which is why static lists fail quickly. The best sources of quality GSA SER verified lists treat verification as an ongoing cycle. The pipeline usually involves scraping with large sets of keyword-based footprints, checking the engine type (WordPress, Drupal, phpFox), and then launching automated verification passes. Only the URLs that return a successful submission acknowledgment survive the cut. Top-tier providers repeat this cycle weekly to keep the data current.
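
The "only survivors make the cut, repeat weekly" rule can be expressed as a freshness filter over verification records. This Python sketch assumes each record is a hypothetical `(url, last_success)` pair, where `last_success` is the time of the most recent successful submission acknowledgment (or `None` if there never was one); the seven-day window mirrors the weekly cycle and is an assumption, not a provider standard.

```python
from datetime import datetime, timedelta
from typing import Iterable, List, Optional, Tuple

# Hypothetical record format: (url, time of last successful submission, or None).
Record = Tuple[str, Optional[datetime]]


def surviving_targets(records: Iterable[Record],
                      now: datetime,
                      max_age: timedelta = timedelta(days=7)) -> List[str]:
    """Keep URLs whose last successful verification falls within max_age of now."""
    kept = []
    for url, last_success in records:
        # Targets that never returned a success acknowledgment are dropped.
        if last_success is None:
            continue
        # Targets not re-confirmed within the window are treated as stale.
        if now - last_success <= max_age:
            kept.append(url)
    return kept


records = [
    ("http://a.example/register", datetime(2024, 1, 6)),
    ("http://b.example/signup", datetime(2023, 12, 20)),
    ("http://c.example/post", None),
]
print(surviving_targets(records, now=datetime(2024, 1, 8)))  # ['http://a.example/register']
```

Re-running the filter after every verification pass, rather than once at list creation, is what keeps the output from decaying into a static list.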

Engine-Specific Segmentation for Tiered Link Building

One of the most critical factors in selecting a list is not just that it is verified, but that it is segmented by platform type. A generic dump of verified URLs offers no strategic control. Advanced GSA SER verified lists are categorized into engines such as Article Directory, Social Network, Wiki, Web 2.0, and Guestbook. For a user building a Tier 1 layer, a list rich in Web 2.0 and social network engines is mandatory because these carry contextual value and trust. Conversely, Tier 2 and Tier 3 blasts rely on bulk comment-based or trackback verified lists to push indexation and link juice upward without inflating the risk profile of the money site.
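
Segmentation is essentially a grouping step. The Python sketch below assumes the list has already been parsed into `(engine, url)` pairs; it does not model GSA SER's actual export format. The engine labels and the `TIER_MAP` mapping are hypothetical, following only the guidance in this section (Web 2.0 and social network engines for Tier 1, comment and trackback engines for lower tiers). Engines the mapping does not cover land in an `unassigned` bucket rather than being guessed.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical engine-to-tier mapping: contextual engines for Tier 1,
# bulk comment/trackback engines for Tier 2 and Tier 3.
TIER_MAP: Dict[str, str] = {
    "Web 2.0": "tier1",
    "Social Network": "tier1",
    "Blog Comment": "lower_tiers",
    "Trackback": "lower_tiers",
}


def segment_by_tier(entries: Iterable[Tuple[str, str]],
                    tier_map: Dict[str, str]) -> Dict[str, List[str]]:
    """Group (engine, url) pairs into tier buckets; unmapped engines go to 'unassigned'."""
    buckets: Dict[str, List[str]] = defaultdict(list)
    for engine, url in entries:
        buckets[tier_map.get(engine, "unassigned")].append(url)
    return dict(buckets)


entries = [
    ("Web 2.0", "http://a.example/"),
    ("Trackback", "http://b.example/post-1"),
    ("Guestbook", "http://c.example/gb"),
]
print(segment_by_tier(entries, TIER_MAP))
# {'tier1': ['http://a.example/'], 'lower_tiers': ['http://b.example/post-1'], 'unassigned': ['http://c.example/gb']}
```

Keeping the mapping as data rather than hard-coding it in the function makes it easy to adjust which engines feed which tier without touching the grouping logic.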

Contextual Footprints and Relevance

Verification alone does not guarantee a quality backlink. The concept of relevance has evolved, but in automated link building, platform relevance still matters. A verified list rich in targeted footprints, meaning sites that relate specifically to a niche, yields much higher content cohesion. Expert list curators integrate contextual filtering by running post-verification keyword scans: they check whether the domain title or URL path contains niche-specific terms. When you acquire niche-specific GSA SER verified lists, the resulting links sit on pages where the surrounding content does not send conflicting semantic signals to search engines.
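
A post-verification keyword scan of the kind described here can be as simple as a case-insensitive substring check. This Python sketch assumes each verified entry comes with its URL and page title; the keyword list is hypothetical, and the check is deliberately basic (no stemming or synonyms).

```python
from typing import Iterable
from urllib.parse import urlparse


def is_niche_relevant(url: str, title: str, keywords: Iterable[str]) -> bool:
    """Return True if any niche keyword appears in the page title or URL path.

    Deliberately simple case-insensitive substring scan; it does not
    handle stemming, synonyms, or page body content.
    """
    path = urlparse(url).path.lower()
    title_lc = title.lower()
    return any(k.lower() in path or k.lower() in title_lc for k in keywords)


print(is_niche_relevant("http://x.example/gardening-tips/", "Home", ["gardening"]))  # True
print(is_niche_relevant("http://y.example/cars/", "Auto Blog", ["gardening"]))       # False
```

Running this after verification, rather than before, avoids spending scan time on targets that would have been discarded anyway.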

Avoiding the Spam Trap

A verified target is not automatically a safe target. Badly compiled GSA SER verified lists often include domains with a high OBL (outbound link) count and heavily spammed pages that have been algorithmically penalized. The mark of a useful list is that it includes platform metrics. A refined list strips out domains that appear on blacklists or exceed a defined spam-score threshold. This pruning process turns a dangerous bulk list into a safer mid-tier asset. Using such a clean list also reduces the need to run GSA SER's built-in spam filter at maximum aggression, which can itself throttle performance.
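
The pruning step amounts to a filter over per-target metrics. In this Python sketch the `Target` fields, the 0-100 spam-score scale, and the threshold values are all hypothetical; real lists may carry different metrics from different sources, and the blacklist is assumed to hold lowercase hostnames.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set
from urllib.parse import urlparse


@dataclass(frozen=True)
class Target:
    url: str
    obl: int            # outbound links on the page (hypothetical metric field)
    spam_score: float   # hypothetical 0-100 spam score from some metrics source


def prune_targets(targets: Iterable[Target],
                  blacklist: Set[str],
                  max_obl: int,
                  max_spam_score: float) -> List[Target]:
    """Drop blacklisted domains and targets above the OBL or spam-score thresholds."""
    kept = []
    for t in targets:
        # Compare the lowercase hostname against the (lowercase) blacklist.
        domain = (urlparse(t.url).hostname or "").lower()
        if domain in blacklist:
            continue
        if t.obl > max_obl or t.spam_score > max_spam_score:
            continue
        kept.append(t)
    return kept


targets = [
    Target("http://ok.example/a", obl=40, spam_score=10.0),
    Target("http://bad.example/b", obl=5, spam_score=1.0),
    Target("http://spammy.example/c", obl=900, spam_score=75.0),
]
clean = prune_targets(targets, blacklist={"bad.example"}, max_obl=100, max_spam_score=30.0)
print([t.url for t in clean])  # ['http://ok.example/a']
```

Filtering once at list-build time means the campaign itself can run with a lighter spam filter, which is the performance trade-off described above.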

Integration with Captcha Solving and Indexing

The true measure of success for GSA SER verified lists is the final indexation rate. Verified posting is pointless if the created link is never crawled by Googlebot. Premium lists often include platforms where new content triggers an automatic ping to Google. These lists are also designed to work in tandem with text and image captcha solvers. Since the platforms are known and active, the captcha challenges tend to be standard (reCAPTCHA v2, text captchas) rather than exotic custom captcha systems that break automated solvers. This alignment ensures that verification leads not just to a successful submission but to a live, indexable entry point.

Where to Source Reliable Verified Data

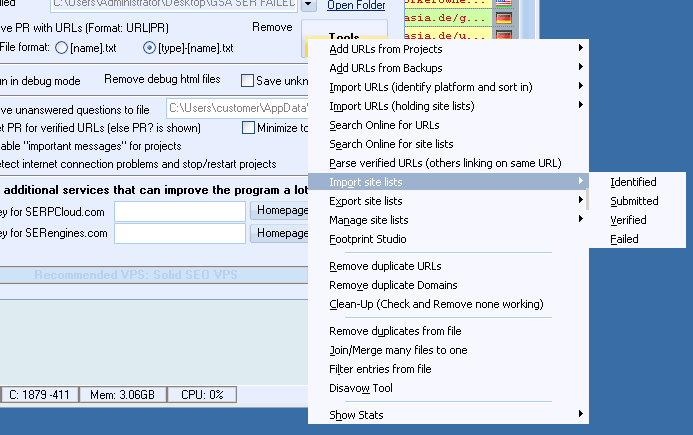

Services that specialize in GSA SER training and tools frequently offer monthly subscriptions to these lists. Buying a one-time list from a random forum is rarely cost-effective because the data decays within days, whereas a subscription provides a rolling set of fresh targets. Experienced users also build their own internal repository by running a dedicated "prospecting" instance of GSA SER that continuously searches for and verifies targets, exporting only the successful hits. This self-made approach keeps your GSA SER verified lists unique and unshared, removing the competitive overlap that plagues publicly sold lists.

The Future of Automated Verification

As web platforms evolve to be more dynamic, the definition of verification is shifting from simple HTTP status checks to behavioral analysis. A URL might load in a browser but block the headless POST request. Modern list verifiers now emulate browser fingerprints to ensure the platform genuinely accepts the submission, not just a 200 OK response. For users seeking to maintain an edge, focusing on GSA SER verified lists that pass this deeper level of behavioral verification is no longer optional; it is the dividing line between a link that sticks permanently and one that disappears in a matter of hours.